Could We Create Binary Diffs for OpenStreetMap Planet Files?

Due to the recent spike in OpenStreetMap planet dump traffic, some people have wondered why so many users are redownloading the whole planet every week. The unfortunate truth is that applying replication diffs takes a long time1 and is not 100% reliable.2 I was wondering whether creating, distributing and applying binary deltas could help here.

The OSM PBF format consists of a sequence of blocks with data inside. Usually per block there is one kind of objects: nodes, ways or relations. Blocks can be up to 232 bytes in size3, but usually capped at a much, much smaller size. The planet file currently consists of more than 14000 blocks, so every block is on average around 4 MB in size.4

If we assume that most changes are in fact additions to OSM, most changes would happen at the end of every section of the respective kinds of objects. This would mean that most blocks at the beginning would stay the same, as they rarely change and thus their delta would be zero.

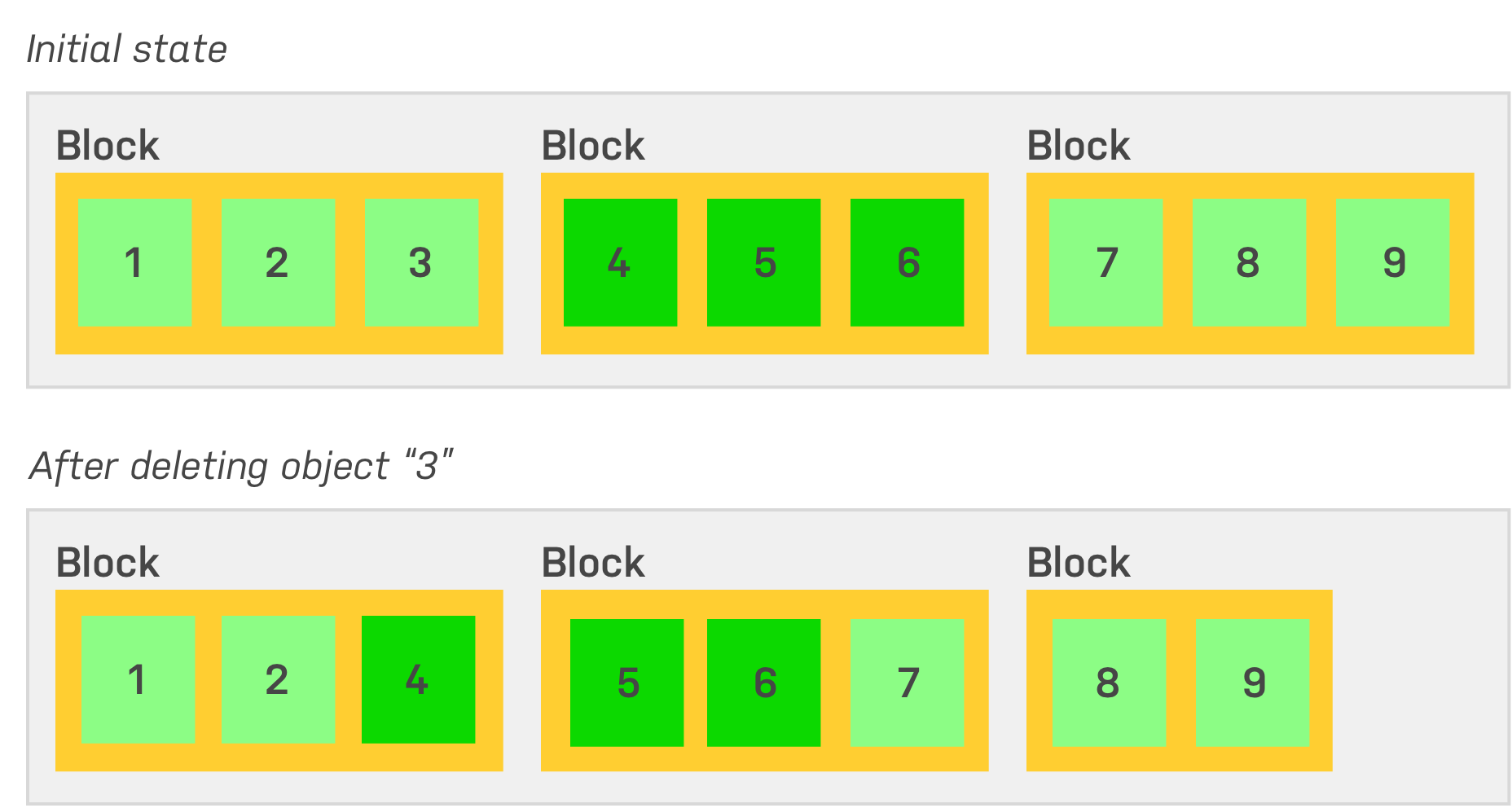

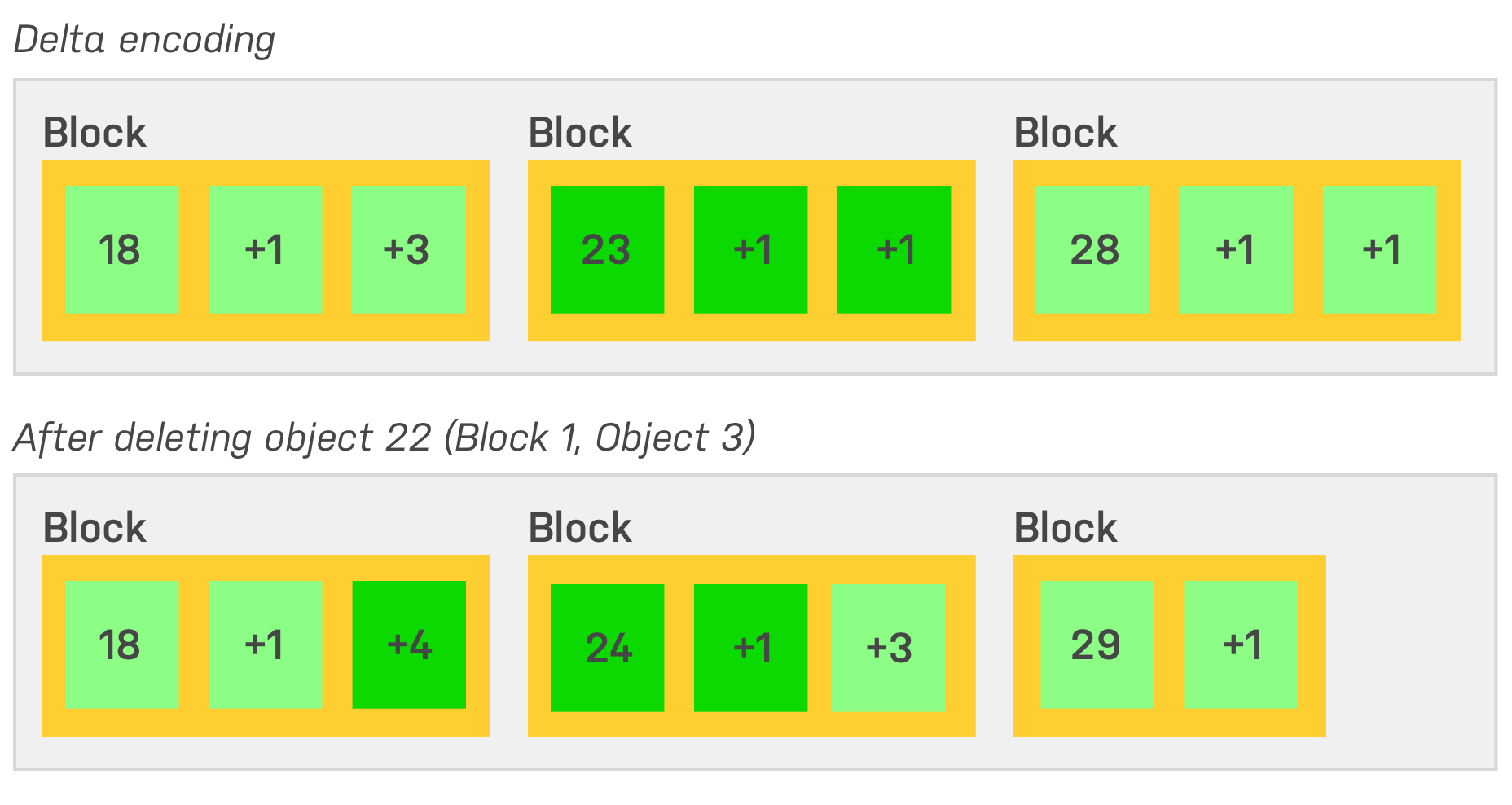

The reality is quite different, unfortunately: Deleted objects move the position of all following objects inside the file, so changes cascade to every subsequent block:

It becomes very clear that with any change the block will look quite differently. Now, if we compare the planet from 2020-01-20 with 2020-01-27 there is zero blocks that stayed exactly the same. Of course this highly depends on the file packaging, e.g. when tested with an extract of Bremen5, 2020-02-01 and 2020-02-02 around 30 % of the blocks stayed the same.

The impact of shifting/cascading gets amplified by the used marshalling: In addition to the heavy use of delta encoded varints inside the file format, every block is compressed using zlib, so small changes can have an even more significant impact on the final block.

A final option is to compare the changes of every block in an uncompressed state: Let’s walk through two files and generate a blockwise binary diff.6

But even after decompression, the differences are too significant: The decompressed blocks are around 8.5 MB4, while the (binary, compressed) deltas between weekly versions are on average 2 MB. A blockwise weekly diff would be around half the size of the full planet, plus any new data at the end. Also, generating and applying binary diffs is far from being fast.

The diffability could be improved by keeping blocks as similar as possible, e.g. by removing deleted objects without allowing subsequent ones to move forward. But this would come at a cost of higher complexity during OSM PBF assembly. Furthermore deleted objects would not reduce file size.

Conclusion: With the current file format, there is no simple alternative approach and sticking to replication diffs is the only viable option at the moment.

Bonus Question: “Could we reduce file sizes by using a different compression algorithm?”

OSM PBF nowadays uses zlib compression which has a good compression ratio for data blocks.7 But how about the newer Zstandard compression, which promises smaller files than zlib at a much higher performance?

Bremen

| Compression Ratio | Decoding Duration (cumulated) | |

|---|---|---|

| zlib | 2.28x | 464 ms |

| zstd | 2.40x | 169 ms |

Planet8

| Compression Ratio | Decoding Duration (cumulated) | |

|---|---|---|

| zlib | 2.36x | 1306 s |

| zstd | 2.46x | 383 s |

This means that a 50 GiB planet file could be reduced by around 2 GiB while significantly improving read performance by using Zstandard. Such a change would not be a simple task: Zstandard compatibility would have to be implemented in a significant number of applications and libraries.

Whether such a change is worth the speed-up is questionable, since most of applications need to assemble geometries from OSM data, which is the biggest performance bottleneck in most usecases.

Around an hour on a quad core system with good internet connectivity and spinning hard disks. ↩︎

Diffs break sometimes, if for example very large changesets are uploaded and the processes that generate or apply the replication diffs exhaust their resources or run into timeouts. ↩︎

See fileformat.proto,

message Blobheader. Please notice that this describes the zlib-compressed size. Average compression ratio is around 2x. ↩︎On an OSM.org planet file, generated using planet-dump-ng ↩︎ ↩︎

Extract from Geofabrik, generated using osmium ↩︎

By the way, don’t try to run

bsdiffon a planet file, the expected memory usage is around 900 GB. ↩︎Fluctuates across the file (relations have a higher ratio than nodes), but averages around 2x. ↩︎

Tested on a relatively busy quad core Intel Core i7-2600 with spinning hard disks. Compression level for Zstandard was set to 20 (zstd supports up to 22), zlib compression level of OSM planets is set to 9 (maximum compression). Code from gosmparse has been used to perform the benchmark. No parallel decoding has been peformed. ↩︎